
TDD anti-patterns - episode 6 - The one, The peeping Tom, The Flash and The Jumper - with code examples in Java

The content here is under the Attribution 4.0 International (CC BY 4.0) license

This is a follow-up in a series of posts on TDD anti-patterns. The first post in the series covered the liar, excessive setup, the giant, and the slow poke. Those four are among the 22 anti-patterns formalized in James Carr's post and discussed in a Stack Overflow thread.

Do you prefer this content in video format? The video is available on demand on Livestorm.

In this post we focus on the last two anti-patterns from those lists, the one and the peeping Tom, and on two new ones: the flash and the jumper. The two new ones concern the practice of test-driving code rather than the code itself.

Takeaways

- The one is an aggregation of anti-patterns

- Having to deal with global state during test execution brings complexity

- Most of the anti-patterns covered in this series relate to code; the flash focuses on the practice of writing tests

- The jumper is related to learning how to test drive code

How Episode 6 Closes the Circle: The One combines The Giant (Episode 1) and The Free Ride (Episode 5). The Peeping Tom extends The Inspector (Episode 2)—both expose internals. The Flash and The Jumper are practice-focused, addressing the red-green-refactor cycle itself rather than specific code patterns.

The one

Despite the name, The One is an anti-pattern that aggregates other anti-patterns; by definition it is related to The Giant and The Free Ride. So far we have seen that anti-patterns are usually combined, and it is not easy to draw a clear line where one ends and the next starts.

As we already saw, The Giant appears when a single test case tries to do everything at once. In the first episode we saw a Nuxt.js snippet that fit the definition of The Giant.

The following snippet, extracted from the xUnit Patterns book (Meszaros, 2007), demonstrates the giant anti-pattern. Although it has fewer lines than the first episode example, the test exercises all methods at once:

```java
public void testFlightMileage_asKm2() throws Exception {
    // set up fixture
    // exercise constructor
    Flight newFlight = new Flight(validFlightNumber);
    // verify constructed object
    assertEquals(validFlightNumber, newFlight.number);
    assertEquals("", newFlight.airlineCode);
    assertNull(newFlight.airline);
    // set up mileage
    newFlight.setMileage(1122);
    // exercise mileage translator
    int actualKilometres = newFlight.getMileageAsKm();
    // verify results
    int expectedKilometres = 1810;
    assertEquals(expectedKilometres, actualKilometres);
    // now try it with a canceled flight
    newFlight.cancel();
    try {
        newFlight.getMileageAsKm();
        fail("Expected exception");
    } catch (InvalidRequestException e) {
        assertEquals("Cannot get cancelled flight mileage",
                e.getMessage());
    }
}
```

The comments even hint at how to split the single test case into multiple ones. The Free Ride can also be seen in this example: each new piece of setup is followed by its own assertions inside the same test case.
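One possible split, following the comments in the snippet, is sketched here. It is written in plain Java without a test framework so that it runs on its own; in a real suite each method would be a separate test case. The `Flight` and `InvalidRequestException` classes are hypothetical stand-ins rather than the book's code, and the flight number, the miles-to-kilometres factor, and the rounding are assumptions made for illustration.

```java
class FlightChecks {

    // Hypothetical stand-in; unchecked here only to keep the sketch short.
    static class InvalidRequestException extends RuntimeException {
        InvalidRequestException(String message) {
            super(message);
        }
    }

    // Hypothetical minimal Flight with just enough behaviour for the checks.
    static class Flight {
        final String number;
        final String airlineCode = "";
        final Object airline = null;
        private int mileage;
        private boolean cancelled;

        Flight(String number) {
            this.number = number;
        }

        void setMileage(int miles) {
            this.mileage = miles;
        }

        void cancel() {
            this.cancelled = true;
        }

        int getMileageAsKm() {
            if (cancelled) {
                throw new InvalidRequestException("Cannot get cancelled flight mileage");
            }
            // Assumed conversion factor, rounded to the nearest kilometre.
            return (int) Math.round(mileage * 1.609344);
        }
    }

    static void check(boolean condition, String description) {
        if (!condition) {
            throw new AssertionError(description);
        }
    }

    // 1. Only the constructor is exercised and verified.
    static void constructorSetsNumberAndDefaults() {
        Flight flight = new Flight("AB123");
        check("AB123".equals(flight.number), "flight number is kept");
        check("".equals(flight.airlineCode), "airline code defaults to empty");
        check(flight.airline == null, "airline defaults to null");
    }

    // 2. Only the mileage conversion is exercised and verified.
    static void mileageIsConvertedToKilometres() {
        Flight flight = new Flight("AB123");
        flight.setMileage(1000);
        check(flight.getMileageAsKm() == 1609, "1000 miles is about 1609 km");
    }

    // 3. Only the cancelled-flight behaviour is exercised and verified.
    static void cancelledFlightRejectsMileageRequest() {
        Flight flight = new Flight("AB123");
        flight.cancel();
        try {
            flight.getMileageAsKm();
            throw new AssertionError("expected InvalidRequestException");
        } catch (InvalidRequestException e) {
            check("Cannot get cancelled flight mileage".equals(e.getMessage()),
                    "message explains the failure");
        }
    }

    public static void main(String[] args) {
        constructorSetsNumberAndDefaults();
        mileageIsConvertedToKilometres();
        cancelledFlightRejectsMileageRequest();
        System.out.println("all checks passed");
    }
}
```

With this shape, a failure points at one behaviour by name instead of at a line somewhere inside a long method.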

The one - root causes

- Not refactoring tests as code evolves—allowing a single test method to grow over time

- Lack of test decomposition—not breaking complex tests into focused units

- Copy-paste testing strategy—duplicating setup and assertions rather than extracting patterns

The next code example may make The Free Ride easier to spot. As in the previous episode, it was extracted from the Jenkins repository. Here the line between The Free Ride and The Giant is a bit blurry, but it is still easy to see that a single test case is doing too much.

```java
public class ToolLocationTest {

    @Rule
    public JenkinsRule j = new JenkinsRule();

    @Test
    public void toolCompatibility() {
        Maven.MavenInstallation[] maven = j.jenkins.getDescriptorByType(Maven.DescriptorImpl.class).getInstallations();
        assertEquals(1, maven.length);
        assertEquals("bar", maven[0].getHome());
        assertEquals("Maven 1", maven[0].getName());

        Ant.AntInstallation[] ant = j.jenkins.getDescriptorByType(Ant.DescriptorImpl.class).getInstallations();
        assertEquals(1, ant.length);
        assertEquals("foo", ant[0].getHome());
        assertEquals("Ant 1", ant[0].getName());

        JDK[] jdk = j.jenkins.getDescriptorByType(JDK.DescriptorImpl.class).getInstallations();
        assertEquals(Arrays.asList(jdk), j.jenkins.getJDKs());
        assertEquals(2, jdk.length); // JenkinsRule adds a 'default' JDK
        assertEquals("default", jdk[1].getName()); // make sure it's really that we're seeing
        assertEquals("FOOBAR", jdk[0].getHome());
        assertEquals("FOOBAR", jdk[0].getJavaHome());
        assertEquals("1.6", jdk[0].getName());
    }
}
```

The peeping Tom

Dealing with global state in test cases adds complexity. For example, it requires a proper clean-up before each test, and sometimes after each one as well, to avoid side effects.

The Peeping Tom describes the problems that global state causes during test execution. On Stack Overflow there is a thread dedicated to this subject, with a few comments that help explain what it is. Christian Posta also blogged about static methods being a code smell.

That thread includes a snippet, extracted from this blog post, showing how singletons and static properties can harm test cases by keeping state between tests. Here we use the same example, with minor changes to make the code compile.

The idea behind a singleton is to create a single instance of an object and reuse it everywhere. To achieve that, we create a class (called MySingleton in this example), make its constructor private so that objects can be created only inside the class, and control creation through the getInstance method:

```java
public class MySingleton {

    private static MySingleton instance;
    private String property;

    private MySingleton(String property) {
        this.property = property;
    }

    public static synchronized MySingleton getInstance() {
        if (instance == null) {
            instance = new MySingleton(System.getProperty("com.example"));
        }
        return instance;
    }

    public Object getSomething() {
        return this.property;
    }
}
```

On its own there is not much to test. For example, the getSomething method exposed by MySingleton can be invoked and its result asserted against a value, as shown in the following snippet:

```java
import org.junit.jupiter.api.Test;

import static org.assertj.core.api.Assertions.assertThat;

class MySingletonTest {

    @Test
    public void somethingIsDoneWithAbcIsSetAsASystemProperty(){
        System.setProperty("com.example", "abc");
        MySingleton singleton = MySingleton.getInstance();
        assertThat(singleton.getSomething()).isEqualTo("abc");
    }
}
```

A single test case passes without any problem. It creates the singleton instance and invokes getSomething to retrieve the property value that was set at the start of the test. The issue arises when we try to test the same behavior with a different value passed to System.setProperty.

```java
import org.junit.jupiter.api.Test;

import static org.assertj.core.api.Assertions.assertThat;

class MySingletonTest {

    @Test
    public void somethingIsDoneWithAbcIsSetAsASystemProperty(){
        System.setProperty("com.example", "abc");
        MySingleton singleton = MySingleton.getInstance();
        assertThat(singleton.getSomething()).isEqualTo("abc");
    }

    @Test
    public void somethingElseIsDoneWithXyzIsSetAsASystemProperty(){
        System.setProperty("com.example", "xyz");
        MySingleton singleton = MySingleton.getInstance();
        assertThat(singleton.getSomething()).isEqualTo("xyz");
    }
}
```

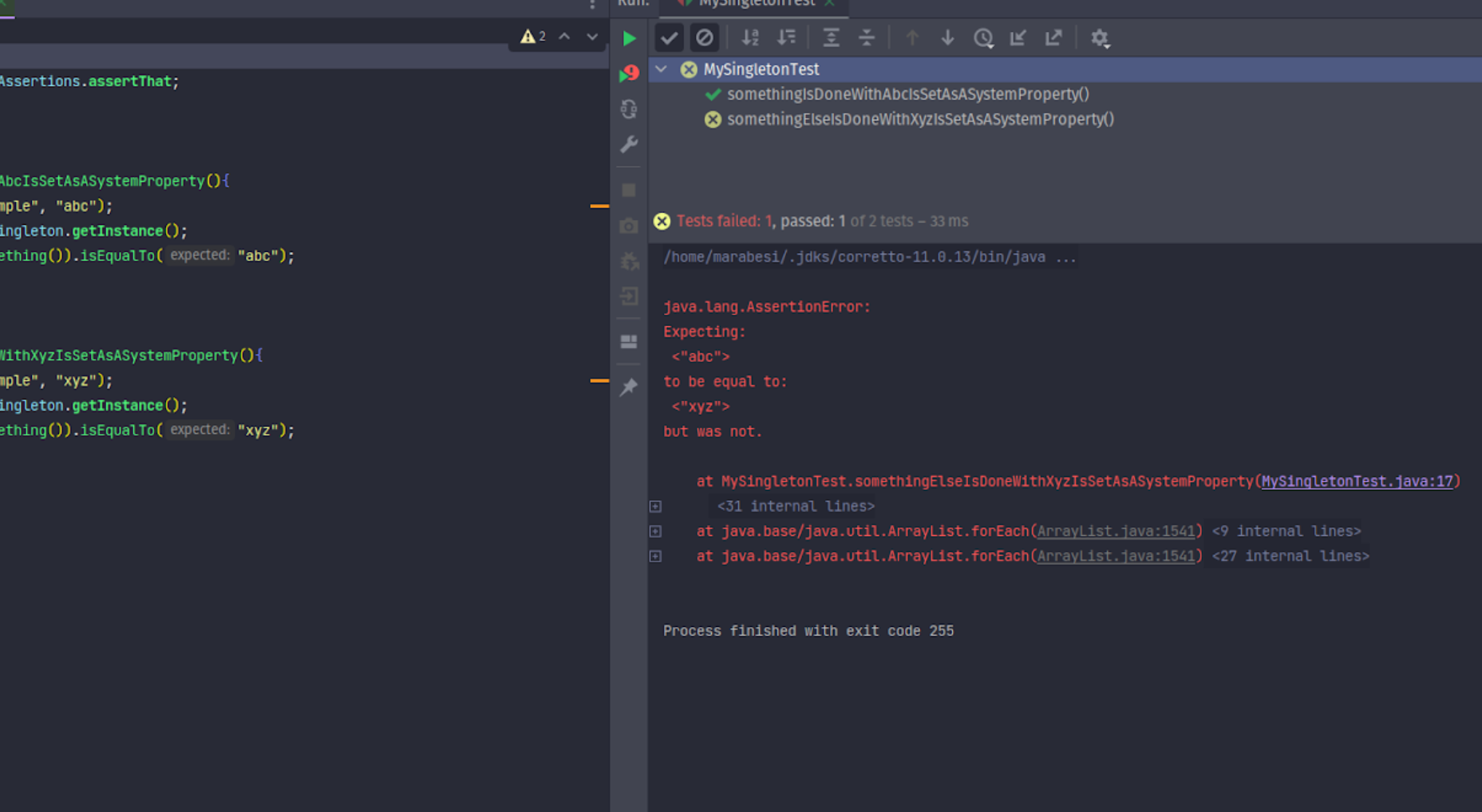

The second test will fail because the singleton instance retains the value abc from the first test:

Because the singleton guarantees only one instance of a given object, the first test to run creates the instance, and every later test reuses it.

This is easy to see with serial test execution, but in test frameworks that execute tests in parallel or don’t guarantee order, failures become harder to diagnose.

Since the singleton’s instance property is private, it cannot be reset without modifying production code. One approach is to use reflection to reset the property before each test:

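```java
import java.lang.reflect.Field;

import org.junit.jupiter.api.BeforeEach;

class MySingletonTest {

    @BeforeEach
    public void resetSingleton() throws SecurityException, NoSuchFieldException, IllegalArgumentException, IllegalAccessException {
        // Clear the cached instance so the next getInstance() call reads the system property again.
        Field instance = MySingleton.class.getDeclaredField("instance");
        instance.setAccessible(true);
        instance.set(null, null);
    }
}
```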

Using the @BeforeEach annotation, we reset the instance before each test. While using reflection to reset a property may seem acceptable, it introduces extra code and potential side effects. Additionally, when production code depends on a singleton, testing becomes even more challenging.
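To see both the leak and the reflection-based reset outside a test runner, here is a minimal standalone sketch. It repeats the MySingleton class from this section; the `SingletonResetDemo` class and the way `resetSingleton` wraps the reflection exceptions are choices made only for this illustration.

```java
import java.lang.reflect.Field;

class SingletonResetDemo {

    // Same idea as the @BeforeEach method: null out the private static field.
    static void resetSingleton() {
        try {
            Field instance = MySingleton.class.getDeclaredField("instance");
            instance.setAccessible(true);
            instance.set(null, null); // static field, so the target object is null
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("could not reset MySingleton", e);
        }
    }

    public static void main(String[] args) {
        System.setProperty("com.example", "abc");
        System.out.println(MySingleton.getInstance().getSomething()); // abc

        // Without a reset the cached instance still holds the old value.
        System.setProperty("com.example", "xyz");
        System.out.println(MySingleton.getInstance().getSomething()); // abc

        // After the reset the next getInstance() reads the property again.
        resetSingleton();
        System.out.println(MySingleton.getInstance().getSomething()); // xyz
    }
}

class MySingleton {
    private static MySingleton instance;
    private final String property;

    private MySingleton(String property) {
        this.property = property;
    }

    public static synchronized MySingleton getInstance() {
        if (instance == null) {
            instance = new MySingleton(System.getProperty("com.example"));
        }
        return instance;
    }

    public Object getSomething() {
        return this.property;
    }
}
```

The reset works, but it ties the test to the name of a private field: renaming `instance` in production code would break the test without any change in behavior.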

As Rodney Glitzel noted, the problem isn’t the singleton itself, but code that depends on it. Such coupling makes code harder to test.

The peeping Tom - root causes

- Overuse of singletons and static state—creates global mutable state that persists between tests

- Insufficient test isolation—shared state not reset between test execution

- Inadequate dependency injection—forcing tests to manipulate global state instead of injecting dependencies
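As a rough sketch of the dependency-injection alternative named in the last bullet, the class below receives its value source through the constructor instead of reading a system property behind a static accessor. `ConfiguredService`, `describe`, and `InjectionDemo` are hypothetical names introduced for this example; they do not come from the original snippet.

```java
import java.util.function.Supplier;

class InjectionDemo {
    public static void main(String[] args) {
        // Each check builds its own instance, so nothing leaks between them.
        ConfiguredService withAbc = new ConfiguredService(() -> "abc");
        ConfiguredService withXyz = new ConfiguredService(() -> "xyz");
        System.out.println(withAbc.describe()); // value=abc
        System.out.println(withXyz.describe()); // value=xyz

        // Production wiring can still read the system property used earlier.
        ConfiguredService production =
                new ConfiguredService(() -> System.getProperty("com.example"));
        System.out.println(production.describe());
    }
}

// Hypothetical class: depends on a value source passed in by the caller.
class ConfiguredService {
    private final Supplier<String> valueSource;

    ConfiguredService(Supplier<String> valueSource) {
        this.valueSource = valueSource;
    }

    String describe() {
        return "value=" + valueSource.get();
    }
}
```

Because no static state is involved, the two instances can be created in any order, or in parallel, without a reset step.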

The flash

The Flash occurs when developers new to TDD rush ahead before mastering the test-first flow, focusing prematurely on edge cases rather than incremental progress.

Besides that, the flash also happens as a consequence of the following:

Big steps

Taking significant steps at once is often the first barrier to adopting TDD. What constitutes a small step is somewhat subjective, but minimizing effort to write tests is a learnable skill. In TDD by Example, Kent Beck describes baby steps as follows:

Test methods should be easy to read, pretty much straight line code. If a test method is getting long and complicated, then you need to play “Baby Steps.” (Beck, 2000, TDD by Example)

Focus on generalization

Another habit that often blocks the TDD flow is trying to generalize too much from the start instead of listening to what the test (or the production code) is telling you.

The Flash Example: Rushing Ahead

The Flash often manifests in code like this—attempting to build a complete feature before seeing a failing test:

```javascript
// THE FLASH: Writing too much production code at once
function calculateDiscount(customer, cartTotal) {
  let discount = 0;

  if (customer.isPremium) {
    discount = 0.2;
  } else if (customer.isRegular && cartTotal > 100) {
    discount = 0.1;
  } else if (cartTotal > 500) {
    discount = 0.15;
  }

  if (customer.loyaltyPoints > 100) {
    discount += 0.05;
  }

  return cartTotal * (1 - discount);
}

// Test tries to cover all paths at once
test('calculateDiscount handles all cases', () => {
  expect(calculateDiscount({ isPremium: true }, 50)).toBe(40);
  expect(calculateDiscount({ isRegular: true }, 150)).toBe(135);
  expect(calculateDiscount({}, 600)).toBe(510);
  expect(calculateDiscount({ loyaltyPoints: 150 }, 100)).toBe(95);
  // premium (20%) plus loyalty (5%) gives 25% off: 100 * 0.75 = 75
  expect(calculateDiscount({ isPremium: true, loyaltyPoints: 150 }, 100)).toBe(75);
});
```

The problem: The implementation tries to handle all edge cases. The test is monolithic. If the test fails, which case failed? Where do you start refactoring?

The incremental TDD approach:

```javascript
// STEP 1: RED - Write the simplest failing test
test('should apply no discount for basic customer', () => {
  const result = calculateDiscount({ }, 100);
  expect(result).toBe(100);
});

// STEP 2: GREEN - Hardcode the minimal implementation
function calculateDiscount(customer, cartTotal) {
  return cartTotal;
}

// STEP 3: REFACTOR - Improve the code (no new functionality yet)

// STEP 4: RED - Add the next test case
test('should apply premium discount', () => {
  const result = calculateDiscount({ isPremium: true }, 100);
  expect(result).toBe(80); // 20% discount
});

// STEP 5: GREEN - Make this test pass
function calculateDiscount(customer, cartTotal) {
  if (customer.isPremium) {
    return cartTotal * 0.8;
  }
  return cartTotal;
}

// Continue: RED → GREEN → REFACTOR for each additional rule
```

This incremental approach keeps each step small, focused, and verifiable. When a test fails, you know exactly what behavior broke.
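Carrying the cycle one step further, and switching to Java like most of this series, the state after a third iteration might look like the sketch below. The `Customer` class, the use of whole cents instead of decimals, and the check names are assumptions made for this illustration; only the premium and regular-customer rules come from the example above.

```java
class DiscountSteps {

    // Hypothetical customer type with only the flags used so far.
    static class Customer {
        final boolean premium;
        final boolean regular;

        Customer(boolean premium, boolean regular) {
            this.premium = premium;
            this.regular = regular;
        }
    }

    // Amounts are in whole cents (an assumption) to avoid floating-point rounding.
    static long calculateDiscount(Customer customer, long cartTotalCents) {
        if (customer.premium) {
            return cartTotalCents * 80 / 100; // 20% off, added in the second cycle
        }
        if (customer.regular && cartTotalCents > 100_00) {
            return cartTotalCents * 90 / 100; // 10% off, added in the third cycle
        }
        return cartTotalCents; // first cycle: no discount
    }

    static void check(boolean condition, String description) {
        if (!condition) {
            throw new AssertionError(description);
        }
    }

    // One named check per behaviour, each added while it was still failing.
    static void basicCustomerPaysFullPrice() {
        check(calculateDiscount(new Customer(false, false), 100_00) == 100_00,
                "basic customer pays full price");
    }

    static void premiumCustomerGetsTwentyPercentOff() {
        check(calculateDiscount(new Customer(true, false), 100_00) == 80_00,
                "premium customer gets 20% off");
    }

    static void regularCustomerAboveThresholdGetsTenPercentOff() {
        check(calculateDiscount(new Customer(false, true), 150_00) == 135_00,
                "regular customer above 100 gets 10% off");
    }

    static void regularCustomerAtThresholdPaysFullPrice() {
        check(calculateDiscount(new Customer(false, true), 100_00) == 100_00,
                "regular customer at exactly 100 pays full price");
    }

    public static void main(String[] args) {
        basicCustomerPaysFullPrice();
        premiumCustomerGetsTwentyPercentOff();
        regularCustomerAboveThresholdGetsTenPercentOff();
        regularCustomerAtThresholdPaysFullPrice();
        System.out.println("all checks passed");
    }
}
```

Each check maps to one rule, so the remaining rules (the 500 threshold and the loyalty bonus) can be added the same way, one failing check at a time.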

The flash - root causes

- Overambitious first steps—attempting to write complete implementations before seeing tests fail

- Lack of TDD discipline—developers familiar with “write code first” struggle with incrementalism

- Pressure to deliver—rushing feature implementation encourages skipping the incremental approach

The jumper

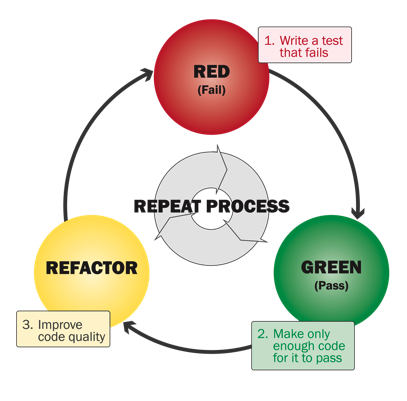

The Jumper is the practice of attempting test-driven development while skipping steps in the red-green-refactor cycle.

The classic TDD approach is: write a failing test (red), write code to pass it (green), then improve the code (refactor) (Beck, 2000). In Tech Lead Journal episode 90, Uncle Bob notes he often repeats red-green multiple times before the refactor phase.

The refactor phase is optional, unlike red-green. Refactoring is less frequent when starting TDD but becomes essential as code evolves and requirements change.

Following the TDD rules strictly usually requires making assumptions that can make the flow difficult or lead to skipping steps. Avoiding these pitfalls will help:

Red

- Trying to make it “perfect” from the start

- Getting blocked by not knowing what to code

- Refactoring on red:

  - Changing class names

  - Fixing styles (any kind of style)

  - Changing files that are not related to the test

Green

- Not trusting the hard-coded value (“I know it will pass”)

Refactor

- Making changes that break the tests
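The pitfall listed under Green, not trusting a hard-coded value, can be illustrated with a small sketch. The `HardCodedGreen` class and both `sum` variants are hypothetical names used only here; the point is that a hard-coded return value is a legitimate green step, and that a second check with different inputs is what forces the general implementation.

```java
class HardCodedGreen {

    // Cycle 1 (green): the only check so far asks for 2 + 3, so returning 5 is enough.
    static int sumAfterFirstCheck(int a, int b) {
        return 5;
    }

    // Cycle 2 (green): a check with different inputs forces the general rule.
    static int sumAfterSecondCheck(int a, int b) {
        return a + b;
    }

    static void check(boolean condition, String description) {
        if (!condition) {
            throw new AssertionError(description);
        }
    }

    public static void main(String[] args) {
        // The first check passes against the hard-coded version.
        check(sumAfterFirstCheck(2, 3) == 5, "2 + 3 is 5");

        // A second check would be red against the hard-coded version...
        check(sumAfterFirstCheck(10, 1) != 11, "hard-coded version cannot handle 10 + 1");

        // ...and both checks are green once the code is generalized.
        check(sumAfterSecondCheck(2, 3) == 5, "2 + 3 is 5");
        check(sumAfterSecondCheck(10, 1) == 11, "10 + 1 is 11");

        System.out.println("all checks passed");
    }
}
```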

Ultimately, mastering TDD requires practice. If you’ve practiced TDD, you’ve likely encountered these issues. Remember to follow the red-green-refactor cycle as strictly as possible.

The jumper - root causes

- Unclear red-green-refactor cycle understanding—skipping phases to save perceived time

- Refactoring during red phase—changing production code or class structure before the test passes

- Insufficient discipline—stopping green phase too early or refactoring carelessly in the refactor phase

References

- Meszaros, G. (2007). xUnit Test Patterns: Refactoring Test Code. Addison-Wesley.

- Beck, K. (2000). Test-Driven Development: By Example. Addison-Wesley Professional.

Appendix

Edit May 26, 2022 - Codurance talk

Presenting TDD anti-patterns episode 6 at Codurance.

Changelog

- Feb 15, 2026 - Grammar fixes and minor rephrasing for clarity

About this post

The content of this post was assisted by AI, which helped with research, content curation, and code suggestions.